Strengthen cyber threat defense with 3 new ways to detect anomalies

Modern cyber attacks are designed to evade detection. Legacy, rules-based cybersecurity tools and techniques have met their match.

For example, Active Scanning, SSH Brute Force, and Domain Generation Algorithm (DGA), which are recognized in the MITRE ATT&CK® Enterprise Matrix, are three types of modern attacker techniques that can evade traditional rule-based detection mechanisms due to their flexibility and adaptability.

Consider Active Scanning, a technique used to discover devices, systems, or services on a network by actively sending packets or probes. Security teams that try to detect it with traditional security tools may end up causing more harm than good. A typical approach is to set a hard threshold that acts as a tripwire, raising an alarm when the number of scans exceeds a fixed count. However, this rule-based defense tactic is limited. If the threshold is too high, you’ll likely miss actual threats; if the threshold is too low, you’ll be inundated with false positive alerts.
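The threshold trade-off described above can be illustrated with a minimal sketch. The function name, the hourly counts, and the two threshold values are all hypothetical and chosen only to make the point visible; they are not taken from any real environment or product.

```python
def threshold_alerts(connection_counts, threshold):
    """Return the indices of intervals whose count exceeds a fixed threshold."""
    return [i for i, count in enumerate(connection_counts) if count > threshold]

# Hypothetical hourly outbound connection counts for a single host.
# Assume index 3 is a legitimate batch job and index 6 is a low-volume scan.
hourly_counts = [40, 55, 48, 900, 52, 47, 180, 50]

# A high threshold stays silent and misses the scan at index 6.
high = threshold_alerts(hourly_counts, threshold=1000)

# A low threshold fires on nearly every hour, burying the scan in noise.
low = threshold_alerts(hourly_counts, threshold=45)

print(high)
print(low)
```

No single fixed value separates the scan from the batch job here, which is the limitation a per-environment baseline is meant to address.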

Companies that rely on these traditional tactics and techniques are setting themselves up for failure. Modern cybersecurity requires a new approach.

A deep dive into Lacework anomaly detection

Lacework leverages statistical models and AI to dynamically determine when something suspicious is taking place in a way that’s unique to each customer’s cloud environment. Following a data-centric approach, we achieve the best detection outcomes for our customers by placing a strong focus on the nature and quality of the data that Lacework uniquely captures, which is fed to these models for both training and inference.

Lacework recently deployed new anomaly detection methods to address the three common adversarial techniques mentioned above: Active Scanning, SSH Brute Force, and DGA. We are folding these new ad hoc detections into our industry-leading anomaly detection engine that’s the foundation of our cloud-native application protection platform (CNAPP).

These models provide signals that are further corroborated and enriched by the innovative Lacework composite alerts feature, generating high-confidence alerts for ongoing threats for our customers.

Let’s take a deep dive into the models.

Active Scanning detection

Active Scanning involves sending network traffic such as pings or port probes to various IP addresses to determine which ones are live and responding. Active Scanning can be used by both red teams and malicious actors for reconnaissance and can identify vulnerabilities in a target network or system. Identifying malicious scanning behavior allows security teams to detect potential security threats before they escalate into more advanced attacks.

While there are many types of Active Scanning, our detection methodology focuses on those that have the potential to be most malicious: when a host within the customer’s environment starts performing outbound network scanning. A sudden increase in the volume of observed network connections on a given host often represents a clear sign of such activity. Therefore, our method is based on identifying significant and sudden changes in the volume of connections initiated by hosts in the customer’s cloud environment.

A look into the methodology

Our detection method leverages network traffic data from our customers’ cloud environments and observes network behavior representative of normal network activity. The data is then used to train an unsupervised machine learning model that provides a flexible approach to modeling data distributions.

The model, which is informed by normal network behaviors for each specific customer, is used to detect anomalies as data points belonging to low-probability areas, which, in this case, may indicate suspicious scanning activities. To avoid generating false positives, we additionally established statistical baselines for hosts exhibiting elevated rates of both successful and failed connections. This measure ensures that alerts are not triggered for activities that are typically considered normal within the environment.
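As a rough illustration of flagging points that fall in low-probability areas, the sketch below fits a Gaussian kernel density estimate to a host's historical connection counts and flags a new count whose estimated log density is below a floor. The kernel density estimate is a stand-in chosen for simplicity; the post does not name the actual model, and the function names, bandwidth, and floor value are assumptions.

```python
import math

def kde_log_density(x, training, bandwidth):
    """Log of a Gaussian kernel density estimate at x, fitted on training points."""
    norm = len(training) * bandwidth * math.sqrt(2 * math.pi)
    total = sum(math.exp(-0.5 * ((x - t) / bandwidth) ** 2) for t in training)
    # Floor the sum so points far from all training data do not hit log(0).
    return math.log(max(total, 1e-300) / norm)

def is_anomalous(count, training_counts, bandwidth=0.5, log_density_floor=-6.0):
    """Flag a connection count that lands in a low-probability region.

    Counts are log-scaled first so that bursts are compared by ratio.
    The bandwidth and floor are illustrative values, not tuned ones.
    """
    training = [math.log1p(c) for c in training_counts]
    return kde_log_density(math.log1p(count), training, bandwidth) < log_density_floor

# Hypothetical history of outbound connections per interval for one host.
normal_history = [40, 55, 48, 52, 47, 50, 61, 44]
print(is_anomalous(50, normal_history))
print(is_anomalous(5000, normal_history))
```

Because the density is learned from each host's own history, the same count can be ordinary for one host and anomalous for another.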

This end-to-end methodology was fine-tuned using an extensive corpus of true incidents in the wild, as well as simulated red team activities that mimic such attacks.

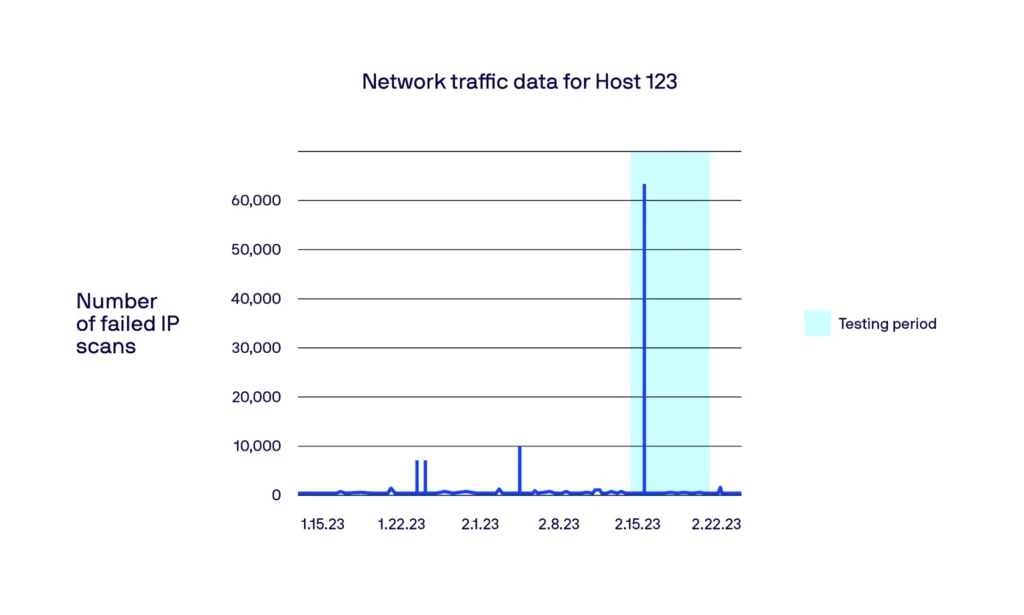

An example of Active Scanning anomaly detection

Below, we showcase a specific case of a compromised host (Host 123) initiating active scans on other hosts. Looking at the count of unsuccessful IP scans, we observed a notable increase around February 16, 2023, which is when the incident happened. Our model successfully identified and flagged this spike during that period.

Thanks to this data-driven approach taken by Lacework, unique and nefarious scans can be better identified and flagged to customers, providing a lower mean time to triage and improved security efficacy.

SSH Brute Force detection

SSH Brute Force is a technique in which an attacker attempts to gain unauthorized access to a system by systematically trying a large number of passwords against a server. Brute forcing credentials may take place at various points during a breach, while an attacker is trying to move laterally within the environment. Our model is engineered to identify situations where a host residing within a customer’s environment begins to issue an unusually high number of unsuccessful login attempts.

Having this SSH Brute Force attack model aids in the early identification of a security incident involving a compromised host. This understanding is critical for preserving the security and reliability of systems and networks by allowing for proactive actions against unauthorized access and the risk of data breaches.

A look into the methodology

To detect unusual numbers of unsuccessful login attempts, we leverage datasets that contain all successful and failed login attempts recorded by our platform. Using this data, we apply a statistical model to identify potential brute force attempts by flagging significant jumps in failed login attempts compared to the historical data.

More specifically, this model combines multiple statistical models, selected according to the prevalence of successful and unsuccessful login attempts and to the specific customer environment. To minimize false positives, we also take into account the magnitude of the jumps and analyze the overall behavior of the environment. For instance, if the account regularly experiences high and comparable spikes, we refrain from triggering an alert.
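The idea of flagging large jumps while staying quiet when comparable spikes are routine can be sketched as follows. This is a simplified stand-in, not the statistical models described above: the median baseline, the function name, and every parameter value are assumptions made for illustration.

```python
from statistics import median

def is_brute_force_spike(history, current, min_ratio=5.0, min_jump=20,
                         comparable=0.5, routine_count=2):
    """Flag `current` failed-login count if it jumps well above the history.

    All parameter values are illustrative assumptions.
    """
    if not history:
        return False
    typical = median(history)
    # Require both a large absolute jump and a large relative one.
    if current - typical < min_jump or current < min_ratio * max(typical, 1):
        return False
    # Suppress the alert when comparable spikes are routine in the history.
    past_spikes = sum(1 for h in history if h >= comparable * current)
    return past_spikes < routine_count

# Hypothetical failed-login counts per interval.
quiet_history = [2, 0, 3, 1, 2, 4, 1]
noisy_history = [3, 200, 2, 260, 4, 240, 3]

print(is_brute_force_spike(quiet_history, 250))
print(is_brute_force_spike(noisy_history, 250))
```

The same count of 250 failed logins is treated differently in the two histories, which mirrors the refusal to alert on spikes that are already typical.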

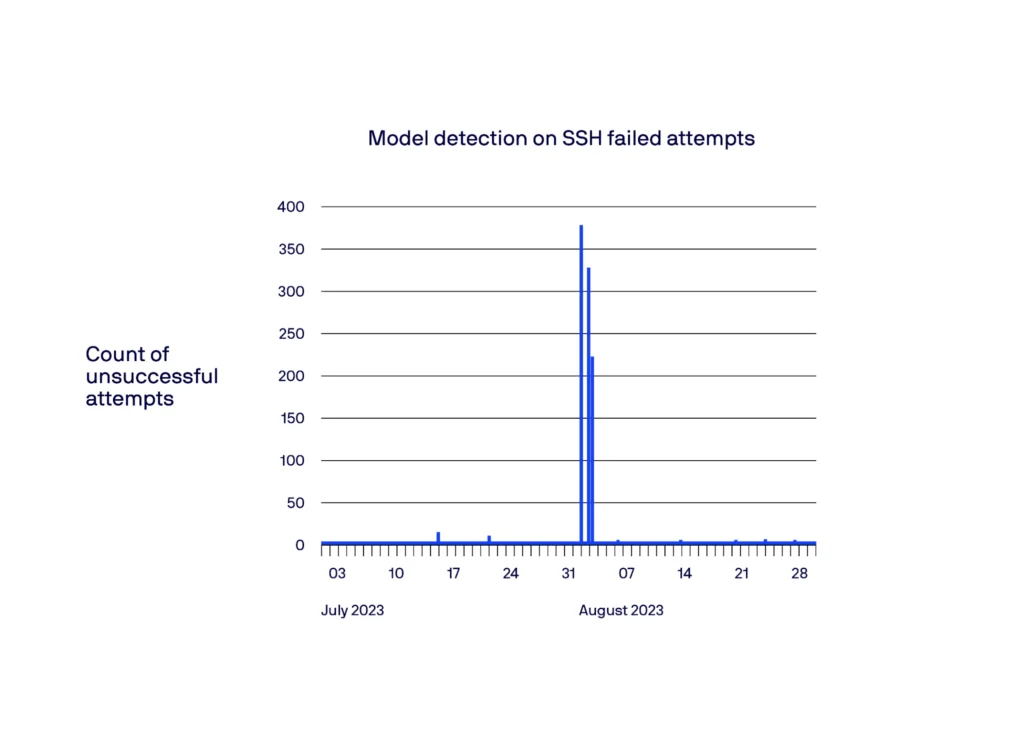

An example of SSH Brute Force anomaly detection

In the example below, we showcase a specific case of a compromised host initiating brute force attempts on other hosts. Looking at the count of unsuccessful SSH login attempts, we observed a notable increase around August 2, 2023, which is when the incident happened. Our model successfully identified and flagged this spike during that period.

Domain Generation Algorithm (DGA) detection

DGA is a technique often employed by malware to evade detection and establish communication channels with Command-and-Control (C&C) servers. The technique dynamically generates a large number of seemingly random domain names, making it challenging for traditional security measures to identify and block them effectively. Traditional methods often rely on manually crafted rules or blocklists, which can quickly become outdated as new DGAs emerge.

To address this challenge, machine learning (and, more recently, deep learning) has emerged as a promising approach for DGA domain detection. In particular, DGA domain detection can be approached as a text classification problem, and natural language processing (NLP) techniques such as embeddings, recurrent neural networks, and transformers can be adopted to form an effective solution.
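Treating a domain name as text usually starts with turning its characters into integer indices that an embedding layer can consume. The sketch below shows one plausible encoding; the vocabulary, the reserved indices, and the maximum length are assumptions for illustration and are not details given in this post.

```python
import string

# Hypothetical vocabulary: lowercase letters, digits, hyphen, and dot.
# Index 0 is reserved for padding and index 1 for any unknown character.
ALPHABET = string.ascii_lowercase + string.digits + "-."
CHAR_TO_INDEX = {ch: i + 2 for i, ch in enumerate(ALPHABET)}
PAD, UNKNOWN = 0, 1

def encode_domain(domain, max_len=16):
    """Map a domain name to a fixed-length list of character indices."""
    truncated = domain.lower()[:max_len]
    indices = [CHAR_TO_INDEX.get(ch, UNKNOWN) for ch in truncated]
    # Right-pad so every encoded domain has the same length.
    return indices + [PAD] * (max_len - len(indices))

print(encode_domain("ab.c", max_len=6))
```

A classifier then sees each domain as a short integer sequence, in the same way a text classifier sees a sentence as a sequence of token IDs.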

A look into the methodology

To build a performant DGA detection model, we focused on the quality of the training data. We drew data from multiple sources, including open-source, internal proprietary, and third-party datasets. Our initial step involved rigorous data preprocessing, where we curated and cleaned the data to ensure its accuracy and consistency. This step is crucial in eliminating biases and noise that could potentially misguide the model development process.

Several existing deep learning architectures for DGA detection have achieved very good performance results. In our case, we decided to adopt a model consisting of an embedding layer, followed by multiple branches of long short-term memory (LSTM) and convolutional neural network (CNN) layers, which are then merged by a linear layer at the output.

Instead of simply flagging every DGA activity, which might result in alert fatigue, the system builds a baseline of DGA likelihood (a “DGA ambient noise”), specific to each individual host in a customer’s environment. The baseline also takes into account the numbers of resolved versus unresolved domains, which leverages the fact that the majority of the DGA domains do not exist and would not resolve.

A detection is fired only when the current DGA likelihood for a host deviates significantly from this established baseline. Imagine a scenario where a host typically accesses a handful of internal domains, rarely venturing out. Suddenly, the host starts churning out a barrage of DGA-generated domains. This anomalous spike in DGA activity, far exceeding the host’s baseline, would trigger an “excessive DGA” detection signal.
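The per-host baseline idea can be sketched in a few lines. Here each DNS lookup carries a DGA probability from some classifier plus a flag for whether the domain resolved, and a detection fires only when the host's current score sits far above its own history. The scoring formula, the weight on unresolved lookups, and the `k` and `min_std` values are assumptions made for illustration, not the production logic.

```python
from statistics import mean, pstdev

def window_score(lookups, unresolved_weight=2.0):
    """Weighted average DGA likelihood for one host in one time window.

    `lookups` is a list of (dga_probability, resolved) pairs. Unresolved
    lookups count more, since most DGA domains do not resolve.
    """
    if not lookups:
        return 0.0
    weights = [1.0 if resolved else unresolved_weight for _, resolved in lookups]
    weighted = sum(p * w for (p, _), w in zip(lookups, weights))
    return weighted / sum(weights)

def excessive_dga(baseline_scores, current_score, k=3.0, min_std=0.05):
    """Fire only when the current score is far above the host's own baseline."""
    mu = mean(baseline_scores)
    # Floor the spread so a very stable host does not alert on tiny changes.
    sigma = max(pstdev(baseline_scores), min_std)
    return current_score > mu + k * sigma

# Hypothetical "ambient noise" scores from earlier windows for one host.
baseline = [0.05, 0.08, 0.04, 0.06, 0.07]
quiet = window_score([(0.10, True), (0.05, True)])
burst = window_score([(0.95, False), (0.90, False), (0.10, True)])

print(excessive_dga(baseline, quiet))
print(excessive_dga(baseline, burst))
```

A host that occasionally looks up odd but resolvable domains stays under its baseline, while a sudden run of unresolved, high-probability domains does not.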

Putting anomaly detection to work

Looking under the hood of anomaly detection is a fascinating exercise. Our team of experts here at Lacework invested a lot of time and energy making sure that these models were built to perfection. But, at the end of the day, a tool is only as valuable as its utility in real-life scenarios. How do these three new anomalies play out in real life?

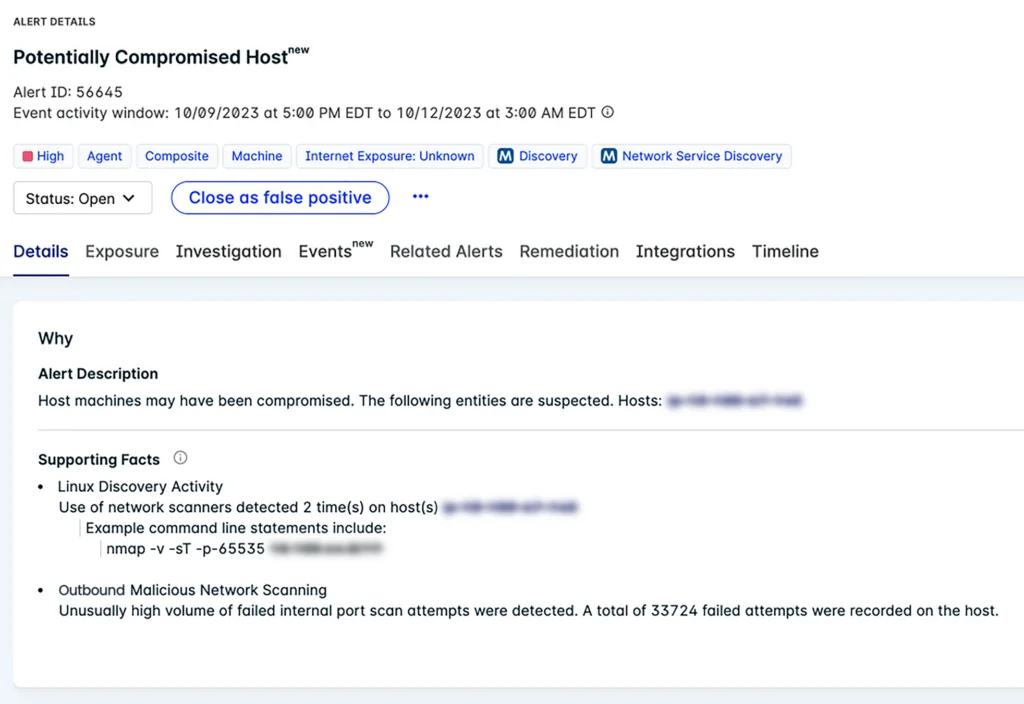

In reality, in an actual threat or red team scenario, the activities described above will likely happen within a timeline that involves other types of suspicious activities. Active Scanning, SSH Brute Force, and DGA are simply pieces of the puzzle. For this reason, the detection signals above can serve as input for composite alerts within the Lacework platform, which are able to correlate a number of weaker signals coming from a variety of data sources.

Lacework composite alerts combine low severity inputs from across your cloud to point out major issues within your environment. Composite alerts can assess your environment and automatically point out active cloud ransomware, active cloud cryptomining, compromised credentials, and more.

Specifically, the detections described in the previous sections become part of our composite alert that points out a compromised host, where the anomalies are enriched with context and information about related activity. This added context ultimately supports the anomaly and increases confidence in the generated alert.
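To make the correlation idea concrete, the sketch below groups weak signals by host and reports a host once several distinct signal types occur within one time window. It is only a toy model of correlation: the tuple layout, signal names, window length, and the "two distinct types" rule are all hypothetical and do not describe how composite alerts are actually computed.

```python
from collections import defaultdict

def composite_alerts(signals, window_seconds=3600, min_distinct=2):
    """Return hosts with several distinct signal types inside one window.

    `signals` is a list of (timestamp_seconds, host, signal_type) tuples.
    """
    by_host = defaultdict(list)
    for ts, host, kind in sorted(signals):
        by_host[host].append((ts, kind))

    alerts = {}
    for host, events in by_host.items():
        for i, (start, _) in enumerate(events):
            # Collect signal types seen within the window opened by this event.
            kinds = {k for ts, k in events[i:] if ts - start <= window_seconds}
            if len(kinds) >= min_distinct:
                alerts[host] = sorted(kinds)
                break
    return alerts

# Hypothetical weak signals: host-a shows three types within 15 minutes,
# host-b shows two types that are two hours apart.
signals = [
    (0, "host-a", "active_scanning"),
    (600, "host-a", "ssh_brute_force"),
    (900, "host-a", "excessive_dga"),
    (0, "host-b", "active_scanning"),
    (7200, "host-b", "ssh_brute_force"),
]
print(composite_alerts(signals))
```

Each signal alone would be low severity; it is their co-occurrence on one host in a short span that raises confidence.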

Detect more with Lacework

These three anomalies join a host of other anomalies that can be detected within the Lacework platform. The power of anomaly detection is undeniable. With anomaly detection, security teams can detect some of the most evasive forms of malicious activity. And because they are not tied down by rules and signatures, security teams are free to detect any form of suspicious activity within their environments, even the newest threats that may not yet be covered by known rules.

To dig more into the Lacework anomaly detection capabilities and their practical use, visit this website or read this white paper.
